Disinformation and war. Verification of false images about the Russian-Ukrainian conflict

David García-Marín, Guiomar Salvat-Martinrey

ICONO 14, Revista de comunicación y tecnologías emergentes, vol. 21, no. 1, 2023

Asociación científica ICONO 14

Desinformación y guerra. Verificación de las imágenes falsas sobre el conflicto ruso-ucraniano

Desinformação e guerra. Verificação de imagens falsas do conflito russo-ucraniano

David García-Marín  * david.garciam@urjc.es

Universidad Rey Juan Carlos, Madrid, Spain

Guiomar Salvat-Martinrey  ** guiomar.salvat@urjc.es

Universidad Rey Juan Carlos, Madrid, Spain

Received: 02 September 2022

Revised: 27 October 2022

Accepted: 28 December 2022

Published: 03 March 2023

Abstract: The aim of this paper is to examine the visual disinformation produced in the context of the Russian-Ukrainian war, analyze its international scope, study the reaction of fact-checking journalism and compare the disinformation strategies adopted by both contenders. A descriptive and inferential statistical quantitative research of the fake visual content verified by fact-checkers between January and April 2022 was conducted. The results confirm the dominance of the strategy based on false context, the relevance of Facebook and Twitter in the distribution of fake news, and the production of an increased number of fake images during the two weeks following the invasion. The most frequent narratives are those consisting of false decisions and military attacks that seek to impute atrocities and war crimes to the opposing side. Pro-Russian visual disinformation makes greater use of fabricated content. If the concentration of messages on a few platforms is the logic that characterizes the Ukrainian disinformation, Russia utilizes a more expansive strategy by using a greater variety of media to spread their narratives. Stories about fake attacks are more usual in Ukrainian visual disinformation. Fake news stories about the international community's reaction to the Russian attack are more prevalent in pro-Kremlin disinformation. It is also observed that as the conflict progresses, fake content from the Ukrainian side is less frequent, while Russian disinformation increases in proportion.

Keywords: Visual disinformation; Hybrid war; Fact-checking; Russia; Ukraine; Fake news.

Resumen: El presente trabajo tiene como objetivo caracterizar la desinformación visual producida en el marco de la guerra ruso-ucraniana, analizar su alcance internacional, conocer la reacción del periodismo de verificación y comparar las estrategias desinformativas de ambos bandos. Se realizó un estudio cuantitativo estadístico descriptivo e inferencial de todo el contenido falso visual comprobado por las entidades internacionales de verificación entre enero y abril de 2022. Los resultados confirman el predominio de la estrategia del falso contexto, la centralidad de Facebook y Twitter en la distribución de información falsa y una mayor presión desinformativa durante las dos semanas posteriores a la invasión. Dominan la escena las narrativas consistentes en falsas decisiones y ataques militares que pretenden imputar al bando contrario la comisión de atrocidades y crímenes de guerra. La desinformación visual prorrusa hace mayor uso del contenido inventado. Si la concentración de los mensajes en pocas plataformas es la lógica que caracteriza al bando ucranio, desde el lado ruso se observa una estrategia más expansiva al utilizar mayor variedad de medios para distribuir sus narrativas. Los relatos sobre falsos ataques son más frecuentes desde Ucrania, mientras que las noticias falsas sobre la reacción de la comunidad internacional ante el ataque ruso son más prevalentes en la desinformación favorable a Moscú. Se corrobora también que conforme avanza la contienda, el contenido fake del lado ucraniano es menos frecuente, a la vez que la desinformación rusa aumenta su proporción.

Palabras clave: Desinformación visual; Guerra híbrida; Fact-checking; Rusia; Ucrania; Fake news.

Resumo: O objetivo deste trabalho é caracterizar a desinformação visual produzida no contexto da guerra russo-ucraniana, analisar seu alcance internacional, conhecer a reação do jornalismo de verificação de fatos e comparar as estratégias de desinformação de ambos os lados. Foi realizado um estudo estatístico quantitativo descritivo e inferencial de todo conteúdo visual falso comprovado por verificadores de fatos entre janeiro e abril de 2022. Os resultados confirmam o domínio da estratégia do falso contexto, a centralidade do Facebook e do Twitter na distribuição de informações falsas e o aumento da pressão desinformadora durante as duas semanas seguintes à invasão. Narrativas que consistem em falsas decisões militares e ataques que procuram imputar atrocidades e crimes de guerra ao lado adversário dominam a cena. A desinformação visual pró-russa faz maior uso do conteúdo fabricado. Se a concentração de mensagens em algumas plataformas é a lógica que caracteriza o lado ucraniano, uma estratégia mais expansiva é observada no lado russo, que utiliza uma maior variedade de meios de comunicação para distribuir suas narrativas. As histórias de ataques falsos são mais freqüentes na desinformação visual ucraniana. As falsas notícias sobre a reação da comunidade internacional ao ataque russo são mais prevalecentes na desinformação pró Moscou. Também é corroborado que à medida que o conflito avança, o conteúdo falso do lado ucraniano é menos freqüente, enquanto a desinformação russa aumenta em proporção.

Palavras-chave: Desinformação visual; Guerra híbrida; Verificação de fatos; Rússia; Ucrânia; Notícias falsas.

1. Introduction

On February 24, 2022, the Russian President Vladimir Putin ordered the invasion of Ukraine, thus starting the first European war of the XXI century and the first armed conflict on the continent in the current era of disinformation, an aspect which has once again become a major element in the propaganda of the struggle. In this regard, “propaganda techniques have always tended to ease the furthering of the idea of war rather than that of peace” (Thompson, 1999, p. 11). This relationship between armed conflicts, propaganda and disinformation has been widely documented, especially since the First World War. That conflict marked the commencement of the systematic development of censorship and the utilization of information for warlike ends, a strategy that has since “acquired the rank of real science” (Pizarroso Quintero, 2005, p. 30). The combatant countries’ newspapers took up positions of support for their armies in order to propagate fake news that “eluded defeatism to meet the goal of raising the soldiers’ morale and counteracting war-weariness in public opinion” (Pérez-Ruiz & Aguilar-Gutiérrez, 2019, p. 104). Disinformation campaigns went as far as to generate denigrating stories about the enemy side, giving rise to what has been termed ‘atrocity propaganda’ (Robertson, 2014).

Several authors have focused their attention on the use of propaganda in armed conflicts from before the XX century. Pizarroso Quintero (2008) analysed Spanish and French press coverage of the Peninsular War (1808-1814), placing Napoleon as a master of the art of propaganda. Wartime disinformation has made intense use of the visual media available at the time in order to further its aims. The work of López Torán (2022) looked closely at British manipulation techniques through the use of photography from the battlefront during the Crimean War between 1853 and 1856. Other studies have focused on the use of film as an instrument of propaganda, particularly during the Second World War, in efforts to “provide public opinion with a distorted vision of what was happening on the field of battle” (Díaz Benítez, 2013, p. 53).

In this area, fake news is an essential component of the hybrid war being waged by Russia, which in its current form consists of the use of “disinformation as a weapon of war and the social networks as infinite trenches” (Morejón-Llamas et al., 2022, p. 2). Russian disinformation strategies in the XX century have been widely studied using generic approaches by, among others, Pupcenoks & Seltzer (2021) and Jankowicz (2020). Such techniques have developed in parallel with the technological advances applied to communication. According to Colom-Piella (2020), the methods currently adopted by the Kremlin’s disinformation campaigns are both diverse and complex. They are based on the dissemination of false or manipulated news, as well as the release of illegally obtained personal information in order to weaken internal and external political adversaries. Online media and platforms in different languages are also used to promote the country’s international image. Furthermore, the massive use of clandestine media for disinformation, via websites or blogs that propagate invented stories or conspiracy theories, has been documented. Such blogs operate on the Internet with the aim of enhancing the reach of false content through groups of hackers, trolls (Van der Vet, 2021) and automated bots acting on the social networks.

These strategies have reached into numerous Western countries. There have been several studies analysing Russian interference in the US elections in 2016 (Inkster, 2016; Ziegler, 2018; Hjorth & Adler-Nissen, 2019). The European Union has also been a frequent objective (Magdin, 2020), especially countries such as the UK (Richards, 2021), the Czech Republic and Slovakia (Rechtik & Mares, 2021) and Spain, where a large part of the destabilising operations promoted by the Russian media conglomerate has been focused on the issue of Catalan independence (López-Olano & Fenoll, 2019).

1.1. Russia against Ukraine: the (dis)information war

However, owing to its geopolitical significance, Ukraine has been the main target of the Kremlin’s modern disinformation strategies. Pro-Russian disinformation concerning Ukraine has been studied by, among others, Golovchenko et al. (2018), Erlich & Garner (2021) and Erlich et al. (2022). Campaigns based on propaganda and malicious information have been under way since at least the time of the Euromaidan disturbances in 2013 and Russia’s occupation of the Crimean Peninsula (2014), the latter territory being the object of a historical dispute between the two countries.

Ukrainian responses to the Russian information war were initially slow, but intensified after 2014 with the limitation, sanction and direct prohibition of Russian media. In 2015, Ukraine cut its analogue cable connections with Russia, these having permitted Kremlin-aligned media to reach the Ukrainian population.

The volume of false information relative to the conflict with Ukraine increased in the weeks leading up to the invasion in February 2022, with videos of false Ukrainian attacks on Russian objectives in the separatist regions of Donetsk and Lugansk (Russian Analytical Digest, 2022). For its part, Ukraine has launched its own information campaigns to counteract the pro-Russian version and spread narratives that favour its interests. As part of this effort to transfer the conflict to the information arena, President Volodimir Zelenski has emerged as an essential actor in the field of communication through the recording of daily videos striving to unify the country, give legitimacy to his actions within the framework of the armed conflict and gain the support of the international community. Zelenski has participated in the fight against fake news concerning his whereabouts, posting videos of himself moving through the streets of Kyiv.

It is in this context of the production of prodigious amounts of fake content that we see the emergence of fact-checking journalism dedicated to checking false news in circulation, especially in the digital world, through collaborative projects promoted by the International Fact-Checking Network (IFCN). International cooperation by fact-checkers grew during the infodemic provoked by Covid-19 and has been reinforced by the Russian-Ukrainian War. The #UkraineFacts project, which brings refutations relating to the war together on a single website (https://ukrainefacts.org), came into being in light of the speed with which disinformation about the conflict spreads, its international distribution moving faster than that observed for disinformation about the pandemic. Another difference from the health crisis is the predominance of image-based fake news, as almost all the hoaxes checked worldwide take a visual format (photographs, photomontages, memes, screenshots and videos). This paper focuses on the study of visual disinformation by the two countries locked in this conflict and the corresponding response by fact-checking agencies.

2. Methodology

2.1. Objectives and hypotheses

This study sets out the following objectives:

- O1. Characterise the visual disinformation related to the Russo-Ukrainian conflict produced and checked during the first four months of 2022.

- O2. Determine the international reach of that content by quantifying the number of countries in which each of the hoaxes produced and checked circulated.

- O3. Analyse the reaction of the international fact-checking agencies, measured by the time taken to check the false images related to the war.

- O4. Compare the disinformation strategies of the two sides in the conflict (Russia vs. Ukraine).

A large part of the fake content which the fact-checkers look at is based on the decontextualised use of photos and videos, as well as fragments from series, films or videogames shared as if they were real (Morejón-Llamas et al., 2022). Due to the ease with which visual items are produced, false visual content is particularly common in disinformation strategies based on false context (Salaverría et al., 2020; Rodríguez-Pérez, 2021). Thus, our first hypothesis (H1) asserts that false context is the most common information disorder in the fake images associated with the conflict.

Numerous studies into the taxonomy of the disinformation related to Covid-19 show that social networks are the chief means of disinformation distribution. Specifically, Facebook is pointed to as the channel where the highest proportion of fake news on the pandemic was circulated (Sánchez-Duarte & Magallón-Rosa, 2020; Herrero-Diz et al., 2020; Noain-Sánchez, 2021). Thus Hypothesis 2 (H2) asserts that Facebook was the most commonly used platform for the propagation of visual disinformation at the beginning of the Russo-Ukrainian War.

Hypothesis 3 (H3) posits that the highest intensity of disinformation occurred at the beginning of the armed conflict, coinciding with Russia’s invasion of Ukraine (the last week of February and the first fortnight of March). This hypothesis is based on studies such as that of García-Marín & Merino-Ortego (2022) into Covid-19, which shows a relationship between the production of a greater proportion of disinformation and the early moments of the health crisis.

As noted in the previous section, Russia has a well-documented track record in the use of hybrid war strategies employing disinformation to destabilise the domestic and foreign policy of certain Western powers. Apart from the studies previously mentioned, these campaigns have been widely documented and analysed by, among others, Doroshenko & Lukito (2021), Alieva et al. (2022), and Innes & Dawson (2022). Therefore, Hypothesis 4 (H4) proposes that the number of pro-Russian items of disinformation favouring the Kremlin’s interests is greater than the number of items in favour of Ukraine.

Hypothesis 5 (H5) posits a swift reaction by fact-checkers to Russo-Ukrainian disinformation. A priori, it is estimated that these agencies take no more than 6 days to perform and post their verification. This estimate is based on previous studies which conclude that the average checking time for disinformation (the time elapsed between publication and the refutation posted by the fact-checkers) was 5.42 days at the beginning of the Covid-19 crisis. As a secondary hypothesis related to H5, it is proposed that there are no significant differences in the checking time for the disinformation of the two opposing sides (H5a).

In their study of fake news produced in the framework of the Catalan conflict caused by the October 1, 2017 referendum, Aparici et al. (2019) conclude that the two sides involved in the crisis (unionists and independentists) employed different disinformation stratagems to get their ideological frameworks across to the population. While the unionists were observed to create a greater proportion of fake news on webs and blogs, hoaxes spread on social networks were more frequent from the independentists. Along the same lines, Hypothesis 6 (H6) affirms that the Russians and Ukrainians adopted different disinformation strategies. The diverging models include the utilization of different information disorders (H6a), dissimilar platforms (H6b), narratives adapted to the agenda of each side (H6c) and different timing (H6d).

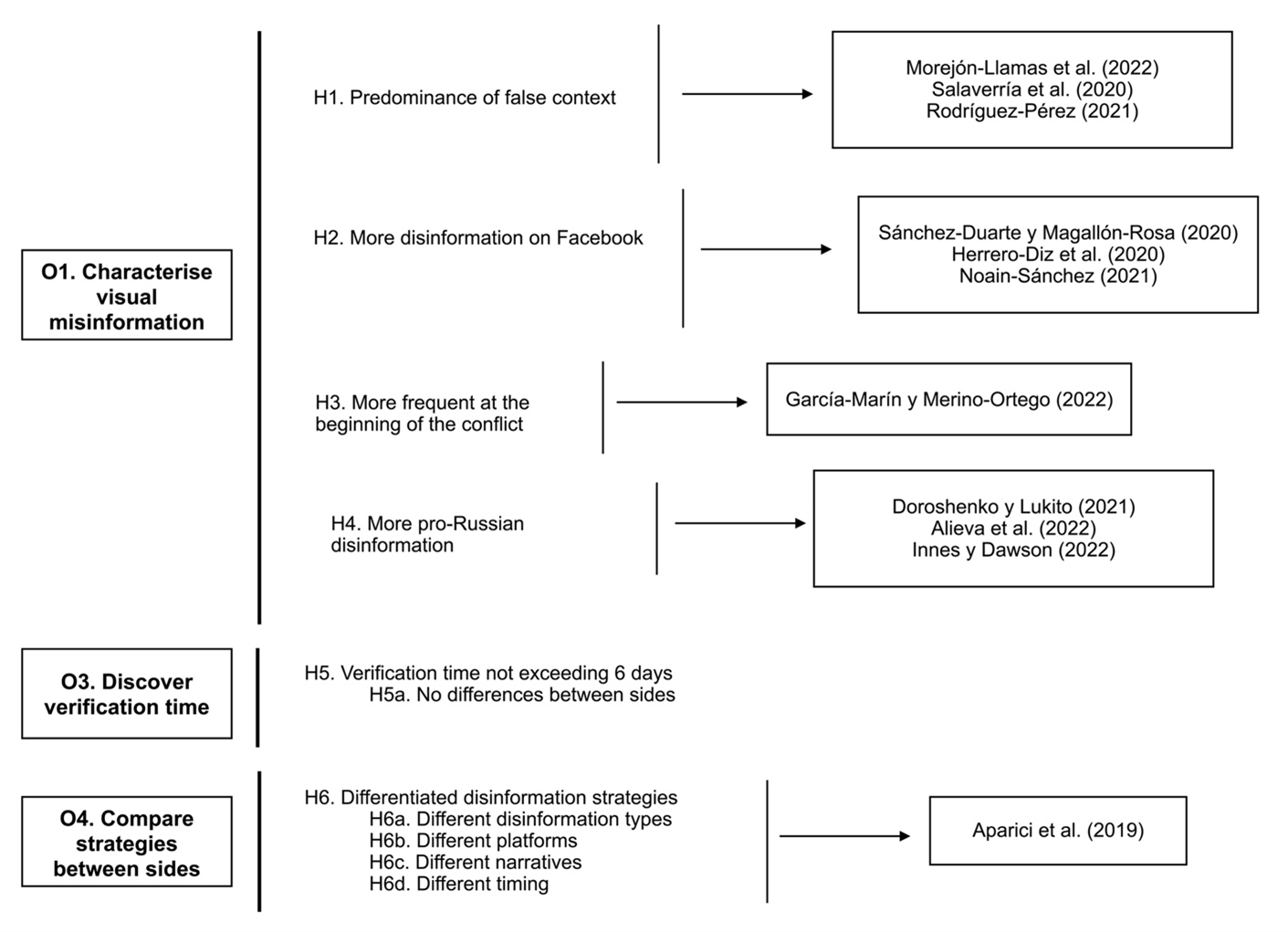

The first four hypotheses (H1-H4) relate to O1, concerning the characterisation of visual disinformation about the conflict. H5 is related to O3, about the time required by fact-checkers to check false content. Finally, H6 is related to O4, concerning the comparison of the two sides’ visual disinformation strategies. Image 1 synthesises the relationship between the objectives and hypotheses, as well as the scientific literature that supports them.

Image 1

Correlation of objectives and hypotheses

Source: created by the authors

2.2. Research design

In order to test these hypotheses, an exclusively quantitative study was performed of all the visual disinformation gathered and checked in the database of the collaborative fact-checking project #UkraineFacts. No sampling took place; the entire corpus corresponding to the object of study over the chosen time period was examined. The timeframe established was from January to April 2022, these being the months of the conflict with the greatest intensity of disinformation. From May onwards, the fact-checking and posting of refutations by the collective project fell considerably (31 checks in May, 30 in June, 15 in July and only 1 in August). A total of 326 false images were analysed by applying the code book shown in Table 1.

2.3. Data analysis

The study was based on descriptive and inferential statistics. As is the common practice in studies grounded on inferential statistical methods, in order to decide on the execution of parametric or non-parametric calculations, the Kolmogorov-Smirnov test was applied, with Lilliefors’s correction of statistical significance, to the two quantitative variables. The test observed an absence of normality in the value distribution in both the “Number of countries” variable [D(326)=.225, p<.001] and in the “Checking time” variable [D(312)=.248, p<.001], therefore it was decided to apply non-parametric instruments for the contrast of hypotheses: the Kruskal-Wallis test for the polytomous variables, the Mann-Whitney U test for the dichotomous variables, and Spearman’s correlation coefficient for the correlational studies.
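As an illustration of the rank-based logic behind these non-parametric tests, the following minimal sketch computes the Kruskal-Wallis H statistic in plain Python. The function names and the toy data are hypothetical and are not taken from the study's corpus (the study itself used SPSS), and the tie correction that statistical packages usually apply is omitted for brevity.

```python
from itertools import chain


def average_ranks(values):
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the window over a run of tied values.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # average of positions start+1 .. end+1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def kruskal_wallis_h(groups):
    """Kruskal-Wallis H statistic (no tie correction) for a list of samples."""
    pooled = list(chain.from_iterable(groups))
    ranks = average_ranks(pooled)
    n_total = len(pooled)
    weighted_sum = 0.0
    offset = 0
    for group in groups:
        rank_sum = sum(ranks[offset:offset + len(group)])
        weighted_sum += rank_sum ** 2 / len(group)
        offset += len(group)
    return 12 / (n_total * (n_total + 1)) * weighted_sum - 3 * (n_total + 1)


# Hypothetical checking times (days) for three made-up categories.
toy_groups = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
h_statistic = kruskal_wallis_h(toy_groups)
degrees_of_freedom = len(toy_groups) - 1  # number of categories minus one
print(f"H({degrees_of_freedom}) = {h_statistic:.3f}")
```

Under the null hypothesis, H is approximately chi-squared distributed with one degree of freedom fewer than the number of categories, which is why the results below report H together with its degrees of freedom.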

The results of these statistical tests are presented in the usual format following APA guidelines, which reports the degrees of freedom (only for polytomous qualitative variables, which have several categories), the sample size, the value of the test statistic and the level of statistical significance (p-value). To assume the existence of significant differences between the categories of a variable, the p-value must be below 0.05, the conventional threshold in this type of analysis. For example, in the Kolmogorov-Smirnov test applied to the checking time reported above, the letter D identifies the test carried out (Kolmogorov-Smirnov), the value 312 refers to the number of images whose checking time could be determined (that is, the sample), the value 0.248 is the test statistic and p<.001 is the level of statistical significance (in this case, being below 0.05, it indicates a statistically significant departure from normality). The statistical work was carried out using SPSS v. 26 software.
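The reporting convention and the 0.05 decision rule described above can be sketched in a few lines of Python. The class, function and pattern names are hypothetical helpers introduced only for illustration; the result strings are copied from this paper.

```python
import re
from dataclasses import dataclass

ALPHA = 0.05  # significance threshold stated in the text

# Matches strings such as "D(312)=.248, p<.001" or "H(4)=4.624, p=.328".
RESULT_PATTERN = re.compile(
    r"(?P<test>[A-Za-z0-9]+)\s*\((?P<args>[^)]*)\)\s*=\s*(?P<value>-?\d*\.?\d+)"
    r"\s*,\s*p\s*(?P<op>[<=])\s*(?P<p>\d*\.?\d+)"
)


@dataclass
class ReportedResult:
    test: str         # letter identifying the test, e.g. "D" or "H"
    args: str         # bracketed content (degrees of freedom or sample size)
    statistic: float  # value of the test statistic
    p_operator: str   # "=" for an exact p-value, "<" for an upper bound
    p_value: float

    def is_significant(self, alpha: float = ALPHA) -> bool:
        # "p<.001" is an upper bound, so the bound itself may equal alpha.
        if self.p_operator == "<":
            return self.p_value <= alpha
        return self.p_value < alpha


def parse_result(text: str) -> ReportedResult:
    match = RESULT_PATTERN.search(text)
    if match is None:
        raise ValueError(f"Unrecognised result string: {text!r}")
    return ReportedResult(
        test=match["test"],
        args=match["args"].strip(),
        statistic=float(match["value"]),
        p_operator=match["op"],
        p_value=float(match["p"]),
    )


for reported in ("D(312)=.248, p<.001", "H(4)=4.624, p=.328"):
    result = parse_result(reported)
    verdict = "significant" if result.is_significant() else "not significant"
    print(f"{reported} -> {verdict}")
```

The bracketed value is kept as raw text because its meaning depends on the test: it is the sample size for the Kolmogorov-Smirnov statistic D and the degrees of freedom for the Kruskal-Wallis statistic H.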

3. Results

3.1. Anatomy of visual disinformation in the Russo-Ukrainian conflict

Concerning O1, in the context of the war between Russia and Ukraine and over the period analysed, false context is the most common form of information disorder, present in 63.19% of the images checked (n=206) (Table 2), thereby confirming H1. Invented content represents 20.55% of the sample (n=67). The two categories make up 83.74% of the total. Social networks are the platforms where the highest number of false images about the conflict have been checked. Facebook and Twitter are the services where the bulk of the content circulates (together they make up 84.65% of the checks). The majority of the disinformation is shared through Facebook, with a frequency far greater than that of Twitter (54.90% against 29.75%), thereby confirming H2.
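The percentages above follow directly from the reported counts and the corpus size of 326 images. The short sketch below reproduces that arithmetic; the variable and function names are illustrative only.

```python
TOTAL_IMAGES = 326  # size of the analysed corpus

# Counts reported in the text for the two most frequent information disorders.
disorder_counts = {"false context": 206, "invented content": 67}


def share(count: int, total: int = TOTAL_IMAGES) -> float:
    """Percentage of the corpus, rounded to two decimals."""
    return round(100 * count / total, 2)


shares = {name: share(count) for name, count in disorder_counts.items()}
combined = share(sum(disorder_counts.values()))
print(shares, combined)
```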

Of the four months analysed, March saw the highest number of verified images (49.38%, n=161), followed by February (36.19%; n=118). March registered the highest percentage because the invasion began in late February, and disinformation appeared in notably smaller quantities before February 24, the date of the Russian attack. In fact, of the 118 items checked in February, a total of 90 (76.27%) came on or after the invasion date (from February 24 to 28), making that last week in February the week with the most checks. The second most active week was the first week of March (n=51), followed by the second week of the same month (n=34). From the third week of March, there was no instance of a week with over 25 items checked. The amount of fake news fell considerably in April to only 45 items (13.80%). According to this data, we can confirm the validity of H3.

False stories of military attacks or decisions represent 28.22% of the sample. Fake reactions by the people affected (19.01%) constitute the second most prominent narrative category. Those narratives that distort or degrade the image of certain key actors or collectives in the conflict are in third (15.95%). After these groups, we find fake reactions by the international community to acts by the two sides (12.57%).

Photographs or static images (51.53%) are slightly more frequent than video disinformation (48.46%). Pro-Russian visual content (49.07%) is more prevalent than images in favour of Ukraine (or against Russian interests), which make up 44.78% of the sample. Pro-Russian checked content is therefore 4.29 percentage points higher than the figure for disinformation favouring the Ukrainian agenda, a difference that validates H4.
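Because the gap between the two sides is a difference between two shares of the same corpus, it is expressed in percentage points rather than as a relative percentage. The sketch below makes the distinction explicit and derives the item counts implied by the reported shares. These counts are an inference from the percentages, not figures given in the text, and the variable names are illustrative.

```python
TOTAL_IMAGES = 326

pro_russian_share = 49.07    # percent of the corpus, as reported
pro_ukrainian_share = 44.78  # percent of the corpus, as reported

# Absolute gap between the two shares, in percentage points.
gap_points = round(pro_russian_share - pro_ukrainian_share, 2)

# Relative gap, as a percentage of the pro-Ukrainian share.
gap_relative = round(
    100 * (pro_russian_share - pro_ukrainian_share) / pro_ukrainian_share, 2
)

# Item counts implied by the reported shares (inferred, not reported).
pro_russian_items = round(TOTAL_IMAGES * pro_russian_share / 100)
pro_ukrainian_items = round(TOTAL_IMAGES * pro_ukrainian_share / 100)

print(gap_points, gap_relative, pro_russian_items, pro_ukrainian_items)
```

The two implied counts add up to 306, which agrees with the sample size N=306 reported for the chi-squared comparisons between the two sides in Section 3.4.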

Finally, each item of disinformation regarding the conflict was detected and checked during the months analysed in an average of 5.94 countries (SD=5.67), meaning that each of these false images circulated in approximately 6 countries on average (O2). Regarding O3, the fact-checking agencies that make up the IFCN took an average of 4.57 days to check the items in question (SD=5.78), below the 6 days proposed in H5, which confirms that hypothesis.

Table 2

Descriptive statistics (frequencies) of the disinformation relative to the conflict between Russia and Ukraine between January and April, 2022

Source: created by the authors

3.2. Spread of disinformation

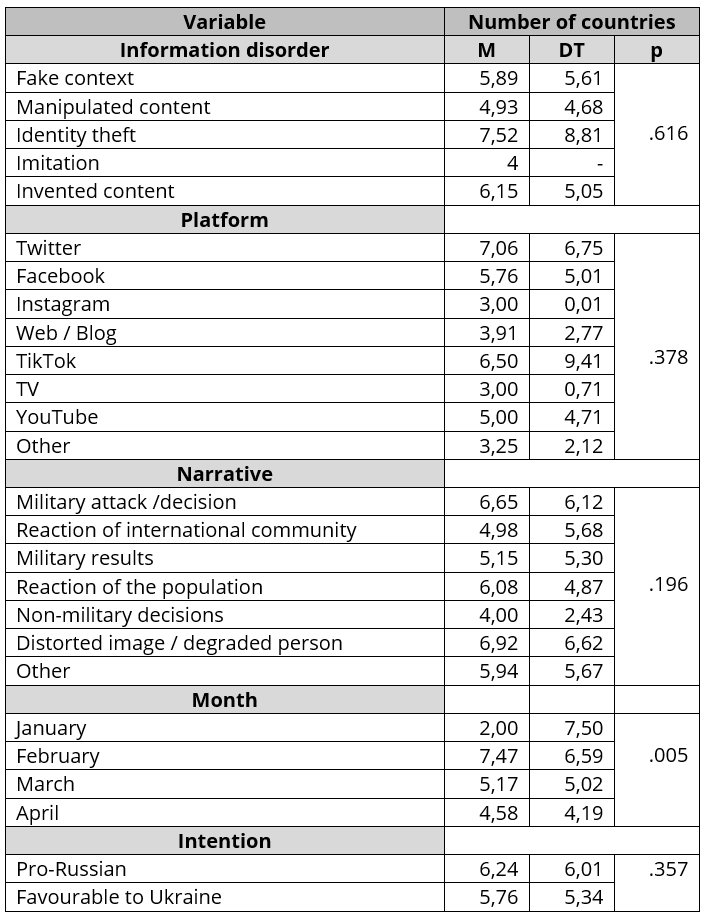

In order to achieve greater granularity in the data on the geographical propagation of visual disinformation concerning the conflict (an aspect related to O2), an analysis was performed of the association between the number of countries in which the fake news was distributed (dependent variable) and the following independent variables: (1) information disorders, (2) distribution platforms, (3) narratives, (4) months of diffusion and (5) the intention behind the disinformation (in favour of the Russian or Ukrainian agenda) (Table 3).

Fake news based on identity theft of individuals or media outlets is the type of information disorder spread over the largest number of countries (M=7.52), followed by invented content (M=6.15) and fake context (M=5.89). Kruskal-Wallis tests determined the absence of significant differences between the values of the categories of this variable [H(4)=3.551, p=.616].

Disinformation disseminated via Twitter (M=7.06) achieved greater propagation than content distributed by other platforms, TikTok being the social media service with the second greatest reach (M=6.50). Again, no statistically significant differences were observed in the number of countries where the disinformation circulated according to the platform used [H(7)=7.512, p=.378].

False narratives that strive to offer a denigrating or distorted image of key individuals or collectives involved in the conflict (M=6.92) and fake military attacks or decisions (M=6.65) are the stories that circulated in the greatest number of countries, although no significant differences are observed between the narrative categories [H(6)=8.624, p=.196].

The highest circulation of visual disinformation among countries took place in February (M=7.47), with a progressive decrease over the following months. In March, disinformation about the conflict was detected in an average of 5.17 countries, while the average registered in April was 4.58. In this case, statistically significant differences were detected in the number of countries reached depending on the month in which the fake image was produced [H(3)=14.854, p=.005]. Publication in February was associated (though weakly) with propagation in a greater number of countries and, therefore, greater reach (rho(323)=.183, p<.001). These figures reinforce the validity of H3, which links higher disinformation pressure with the start of the conflict, the Russian attack on Ukraine.

Finally, pro-Russian visual content was detected and checked in more countries (M=6.24) than that favourable to Kyiv (M=5.76), although the Mann-Whitney U test found no significant difference in the reach of disinformation from the two sides (p=.357).
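For a dichotomous comparison such as this one, the Mann-Whitney U statistic counts, over all cross-group pairs, how often a value from one group exceeds a value from the other. The sketch below is a minimal pure-Python version; the function name and the sample values are hypothetical and are not drawn from the study's data.

```python
def mann_whitney_u(sample_a, sample_b):
    """Return (U_a, U_b), counting each cross-sample tie as half a win."""
    u_a = 0.0
    for a in sample_a:
        for b in sample_b:
            if a > b:
                u_a += 1.0
            elif a == b:
                u_a += 0.5
    # The two U statistics always add up to the number of cross-sample pairs.
    u_b = len(sample_a) * len(sample_b) - u_a
    return u_a, u_b


# Hypothetical numbers of countries reached by items from each side.
pro_russian = [3, 7, 9, 12]
pro_ukrainian = [2, 5, 7, 8]
u_russian, u_ukrainian = mann_whitney_u(pro_russian, pro_ukrainian)
print(u_russian, u_ukrainian)
```

A p-value is then obtained by comparing U with its distribution under the null hypothesis, for which statistical packages typically use a normal approximation when samples are large.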

Table 3

Average and standard deviation in the number of countries where disinformation was checked

Source: created by the authors

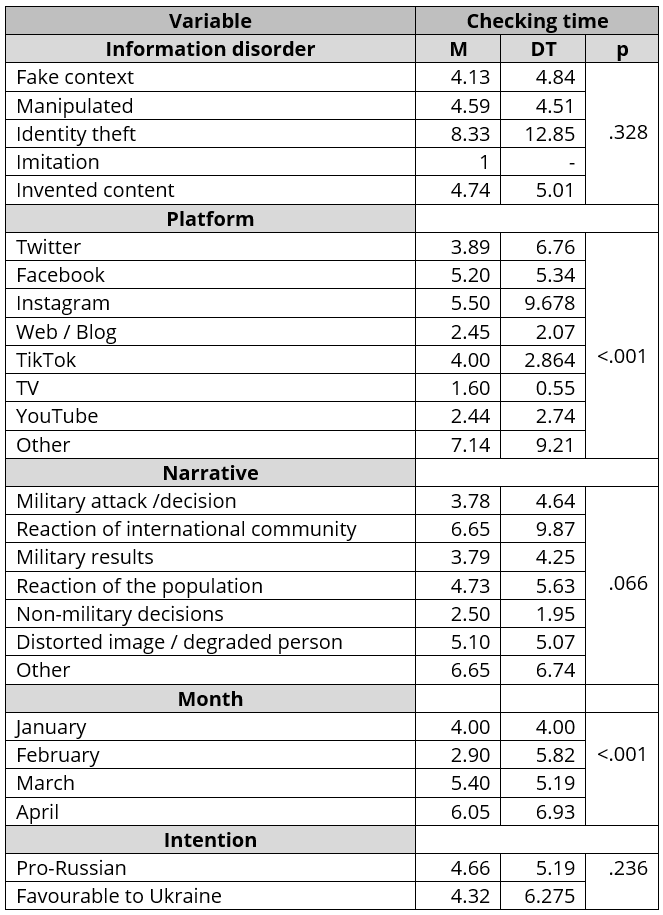

3.3. Fact-checkers' reaction. Analysis of verification time

Regarding O3, the fact-checking agencies which make up the IFCN reacted swiftly to the volume of disinformation generated over the first months of the conflict. As mentioned above, the average time to check the content included in the sample was 4.57 days, less than the 6 days proposed in H5. Identity theft is the information disorder that registers the longest checking time (M=8.33) ahead of invented content (M=4.74) (Table 4). Fact-checkers’ speed in checking false contexts (the most common content) is worthy of mention, it being the type of information disorder which requires least time to check (M=4.13). Note that the figure relative to imitation is not considered, due to its extremely low frequency. Noteworthy differences in checking times relative to the type of disinformation were not observed [H(4)=4.624, p=.328].

The amount of time dedicated to checking content on Facebook is notable (M=5.20), being considerably more than that needed to check Twitter messages (M=3.89). Statistically significant differences were detected in the time required to check images on the various platforms [H(7)=24.900, p<.001]. The propagation of visual disinformation on Facebook is statistically associated with a longer checking time (though the association is weak) (rho(310)=.248, p<.001).

False reactions by the international community to events in the conflict require the longest checking time (M=6.65), followed by the narratives that distort the image of key actors, which are checked in 5.10 days. The differences in checking time between the various narrative categories approach, but do not reach, statistical significance [H(6)=11.807, p=.066].

Conversely, highly significant differences were detected in checking time depending on the month in which the fake content was propagated [H(3)=37.145, p<.001]. While disinformation in February took less than 3 days to check (M=2.90), by April the checking time had more than doubled (M=6.05), despite the gradual reduction in the amount of disinformation related to the war.

Fake images in favour of Russian interests took longer to check (M=4.66) than those promoting the Ukrainian agenda (M=4.32), although the difference is minimal and not statistically significant (p=.236), which serves to validate H5a.

Table 4

Average and standard deviation of checking time

Source: created by the authors

3.4. Differentiated disinformation strategies

This study observes notable differences in the characterisation of the two sides’ visual disinformation, which implies that each of them has adopted different disinformation strategies (O4). Such divergence is established in (1) the type of information disorder, (2) the use of differentiated distribution strategies (platforms), (3) the utilisation of distinct narratives and (4) the timing of the production of fake news.

3.4.1. Information disorders

Ukrainian disinformation can be seen to rely more heavily on false contexts, as 72.60% of the misleading images in Kyiv’s favour fall into that category, as opposed to 56.25% of the Russian images (Table 5). However, Russia uses almost twice as much invented content (26.25%) as Ukraine (13.70%). Chi-squared tests confirm these differences [χ²(4, N=306) = 11.185, p=.025]. According to these figures, H6a is validated.
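The link between the reported chi-squared statistic and its p-value can be checked with a short sketch. It relies on the closed-form upper-tail probability of the chi-squared distribution, which holds only when the number of degrees of freedom is even; the function name is a hypothetical helper, and the statistic and degrees of freedom are those reported above.

```python
import math


def chi2_sf_even_df(statistic: float, df: int) -> float:
    """Upper-tail probability of a chi-squared variable with even df."""
    if df <= 0 or df % 2 != 0:
        raise ValueError("this closed form requires a positive, even df")
    half = statistic / 2
    # P(X > x) = exp(-x/2) * sum_{i=0}^{df/2 - 1} (x/2)^i / i!
    terms = sum(half ** i / math.factorial(i) for i in range(df // 2))
    return math.exp(-half) * terms


# Statistic and degrees of freedom reported for the information disorders.
p_disorders = chi2_sf_even_df(11.185, 4)
print(f"p = {p_disorders:.3f}")
```

The result rounds to the reported p=.025, which lies below the 0.05 threshold used throughout the study.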

3.4.2. Platforms

Ukrainian disinformation makes greater use of Facebook (59.59%), almost 10 percentage points above Russian use (49.68%). The Russians employ web pages and blogs more frequently (5.66%). Practically all the false images favourable to Ukraine are distributed on Facebook and Twitter. These two platforms make up 92.46% of Ukrainian content. If the concentration of messages on a small number of platforms is the logic characterising the Ukrainian side, a more expansive strategy can be seen on the Russian side, employing a greater variety of media. Russian fake news on the two major platforms (Facebook and Twitter) represents 78.61%, 13.85 percentage points less than that of Ukraine. The chi-squared tests verify the differences in the two sides’ distribution strategies [χ²(7, N=305) = 16.204, p=.023], which serves to confirm H6b.

3.4.3. Narratives

The largest divergences are found in the use of distinct narratives adapted to each country’s agenda [χ²(6, N=306) = 38.481, p<.001]. Therefore, H6c is validated. Stories of fake attacks are more frequent in Ukrainian visual disinformation (36.30%) than in Russian (18.75%). However, fake narratives about the international community’s reaction to Russia’s attack are more prevalent in the disinformation favourable to Moscow (18.12% against 7.53% on the Ukrainian side). The objective of this fake news promoted by Russian propaganda is to persuade public opinion of certain countries’ support for the invasion, thus legitimising the Kremlin’s positions.

There are also notable differences in the use of stories that degrade the image of key individuals in the conflict. This narrative is particularly common on the Russian side (25.00% against 6.85% by the Ukrainians), where it is used to associate Zelenski (Image 2) with drug consumption or to portray the Ukrainian people as close to neo-Nazi positions.

Image 2

False narrative denigrating the Ukrainian President Volodimir Zelenski

Source: Maldita.es

3.4.4. Timing

There are also highly significant differences regarding the prevalence of the two sides’ visual disinformation in each of the months analysed [χ²(3, N=306) = 25.810, p<.001]. As time passed, fake news from the Ukrainian side became less frequent, while Russian disinformation grew in proportion. Almost half of Ukrainian misleading images (48.63%) were distributed in February, against 25.62% of Russian ones. These figures validate H6d. The confirmation of the sub-hypotheses H6a-H6d leads us to fully corroborate H6.

Comparison of the disinformation from the two sides

Source: created by the authors
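
The p-values attached to the three chi-squared tests reported in this section can be re-derived from each statistic and its degrees of freedom alone. The following is a minimal sketch, not the authors' own procedure (the paper does not say which software was used): the function name `chi2_sf` and the dictionary labels are illustrative assumptions, and the only inputs are the statistics quoted in the text.

```python
import math


def chi2_sf(stat: float, df: int) -> float:
    """Upper-tail probability P(X >= stat) for a chi-squared variable.

    Uses the closed-form recurrence of the regularized upper incomplete
    gamma function, Q(a + 1, y) = Q(a, y) + y**a * exp(-y) / Gamma(a + 1),
    which is exact for integer degrees of freedom.
    """
    if df < 1 or stat < 0:
        raise ValueError("df must be >= 1 and stat must be >= 0")
    y = stat / 2.0
    if df % 2 == 0:
        a, q = 1.0, math.exp(-y)              # Q(1, y) = exp(-y)
    else:
        a, q = 0.5, math.erfc(math.sqrt(y))   # Q(1/2, y) = erfc(sqrt(y))
    while a < df / 2.0:
        q += y ** a * math.exp(-y) / math.gamma(a + 1.0)
        a += 1.0
    return q


# Statistics and degrees of freedom exactly as quoted in the text;
# the labels are only for display.
reported = {
    "distribution platforms (H6b)": (16.204, 7),
    "narratives (H6c)": (38.481, 6),
    "timing (H6d)": (25.810, 3),
}

for label, (stat, df) in reported.items():
    print(f"{label}: chi2({df}) = {stat:.3f}, p = {chi2_sf(stat, df):.6f}")
```

Under these assumptions the platform test yields a p-value of roughly .023, and the narrative and timing tests fall well below .001, in line with the values reported above. The sketch only checks the p-values; it does not reproduce the underlying contingency tables, which are not given in this section.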

4. Discussion and conclusions

This study joins the existing literature on war propaganda, which, as indicated in the opening section, has for decades exploited the visual media available at each historical moment to achieve its objectives. Fake images of the kind that circulated in previous wars as photographs, film or TV are, in the Russo-Ukrainian conflict, propagated through social networks, with Facebook and Twitter as the principal channels.

The data gathered confirm the results of previous studies, which show the power of fake context as a preferred visual disinformation strategy (Salaverría et al., 2020; Rodríguez-Pérez, 2021), the predominant use of Facebook as a distribution platform (Sánchez-Duarte & Magallón-Rosa, 2020; Herrero-Diz et al., 2020; Noain-Sánchez, 2021), the greater frequency of false content at the beginning of a crisis, in this case the days immediately after the commencement of the invasion (García-Marín & Merino-Ortego, 2022), fact-checkers’ swift response, and the utilisation of different strategies by the two sides in their disinformation output (Aparici et al., 2019).

The low prevalence of fake military results as a narrative is worthy of note. Historically, war propaganda has abundantly exaggerated one’s own successes and minimised one’s own losses in order to raise domestic morale. The low frequency of this narrative category suggests that disinformation in this context may be aimed more at foreign audiences, with two possible objectives: (1) to discredit the enemy’s image abroad by attributing to it atrocities, the disproportionate use of force or war crimes; and (2) to offer a false image of international support for one’s own position in the conflict.

A certain balance can be seen in the number of checks performed on the two sides, though it cannot be determined whether this reflects similar volumes of disinformation from both countries or is instead the result of fact-checkers’ efforts to check the same number of fake news items from each side. This uncertainty stems from the main limitation of studies of this type, which analyse not the disinformation produced but only that which has been detected and verified by fact-checkers. Therefore, content flowing through private instant messaging services such as WhatsApp or Telegram, which is sometimes undetectable for fact-checkers, may be under-represented.

The use of deepfakes is scarce, all but non-existent, despite the concern such misleading videos have provoked. On the contrary, the use of ‘cheapfakes’ still predominates (Paris & Donovan, 2019). These are “hoaxes created by users from the functions of their mobile devices, crude manipulation of pre-existing files or the simple addition of text to alter the original meaning of shared messages” (Gamir-Ríos & Tarullo, 2022, p. 98), and their high frequency is due to their proven efficacy and low technical difficulty (Fazio, 2020).

One of the main contributions of this study is in determining the differences in the visual disinformation coming from the two sides. Pro-Russian fake news makes greater use of fabricated content and employs a larger number of distribution platforms. Fake stories of attacks are more common from Ukraine, while fake news about the international community’s reaction to the Russian attack is more frequent in the disinformation favourable to Moscow. Significant differences can also be observed in the timing of disinformation: as the conflict has developed, Ukrainian fake news has become less frequent, while Russian disinformation has grown in proportion.

This study makes it possible to profile the types of malicious visual content that gained greater international circulation at the beginning of the conflict, and to characterise the disinformation that was checked more quickly. Content based on identity theft, be that of individuals or media outlets, is the type of information disorder propagated in the largest number of countries. Narratives that seek to offer a distorted image of key persons or actors in the war had greater international propagation, as did visual disinformation shared on Twitter, that favouring Russian interests and that distributed during the days before the conflict started (the last week of February). As for the time required for checks, fact-checkers were swifter with fake contexts, disinformation propagated via Twitter, that distributed in February, that favourable to Ukraine and stories of non-military decisions.

The data on fact-checking times show fact-checkers’ effectiveness in verifying fake content. The quick reaction of these agencies was fundamental in debunking hoaxes before they were taken to be true by a large part of the population. Nevertheless, authors such as Wardle (2019) question what the ideal moment for publishing checks is since, should they be published too soon, the fact-checker may actually contribute to the propagation of the misleading claim.

Finally, it is worth noting the considerable increase in checking times as the conflict has gone on, even though, paradoxically, the volume of disinformation has diminished. Future studies should confirm this tendency (also observed in the Covid-19 infodemic) and discern its cause: whether it derives from a more complex production of fake news as the conflict has unfolded or, on the contrary, from fact-checking entities paying less attention to disinformation related to the conflict, which over the months has become less prominent in their daily activities.

Authors’ contribution

David García-Marín: Conceptualization, Data curation, Formal analysis, Investigation, Methodology, Supervision, Visualization, Writing – original draft. Guiomar Salvat-Martinrey: Data curation, Formal analysis, Investigation, Methodology, Writing – review and editing. All authors have read and agree with the published version of the manuscript.

Conflicts of interest: The authors declare that they have no conflict of interest.

Funding

This work is supported by the Jean Monnet Chair EUDFAKE: EU, disinformation, and fake news (Call 2019 – 610538-EPP1-2019-1-ES-EPPJMO-CHAIR) funded by the Erasmus+ program of the European Commission.

References

Alieva, Iuliia; Moffitt, J.D.; & Carley, Kathleen. (2022). How disinformation operations against Russian opposition leader Alexei Navalny influence the international audience on Twitter. Social Network Analysis and Mining, 12(80). https://doi.org/10.1007/s13278-022-00908-6

Aparici, Roberto; García-Marín, David; & Rincón-Manzano, Laura. (2019). Noticias falsas, bulos y trending topics. Anatomía y estrategias de la desinformación en el conflicto catalán. Profesional de la información, 28(3), e280313. https://doi.org/10.3145/epi.2019.may.13

Brennen, J. Scott; Simon, Felix; Howard, Philip N.; & Kleis-Nielsen, Rasmus. (2020, April 7). Types, sources, and claims of Covid-19 misinformation. Reuters Institute. https://cutt.ly/fX4jt1x

Colom-Piella, Guillem. (2020). Anatomía de la desinformación rusa. Historia y Comunicación Social, 25(2), 473-480. https://doi.org/10.5209/hics.63373

Díaz Benítez, Juan José. (2013). Propaganda bélica en la gran pantalla: la incursión de Makin (1942) a través de la película Gung Ho! Historia Actual Online, 31, 53-63. https://cutt.ly/k0VL0r5

Doroshenko, Larissa; & Lukito, Josephine. (2021). Trollfare: Russia’s Disinformation Campaign During Military Conflict in Ukraine. International Journal of Communication, 15, 4662–4689. https://cutt.ly/DX4jhLA

Erlich, Aaron; Garner, Calvin; Pennycook, Gordon; & Rand, David G. (2022). Does Analytic Thinking Insulate Against Pro-Kremlin Disinformation? Evidence From Ukraine. Political Psychology. https://doi.org/10.1111/pops.12819

Erlich, Aaron; & Garner, Calvin. (2021). Is pro-Kremlin Disinformation Effective? Evidence from Ukraine. The International Journal of Press/Politics. https://doi.org/10.1177/19401612211045221

Fazio, Lisa. (2020, February 14). Out-of-context photos are a powerful low-tech form of misinformation. The Conversation. https://cutt.ly/qX7qM5o

Gamir-Ríos, José; & Tarullo, Raquel. (2022). Predominio de las cheapfakes en redes sociales. Complejidad técnica y funciones textuales de la desinformación desmentida en Argentina durante 2020. AdComunica, (23), 97-118. https://doi.org/10.6035/adcomunica.6299

García-Marín, David; & Merino-Ortego, Marta. (2022). Desinformación anticientífica sobre la COVID-19 difundida en Twitter en Hispanoamérica. Cuadernos.Info, (52), 24-46. https://doi.org/10.7764/cdi.52.42795

Golovchenko, Yevgeniy; Hartmann, Mareike; & Adler-Nissen, Rebecca. (2018). State, media and civil society in the information warfare over Ukraine: citizen curators of digital disinformation. International Affairs, 94(5), 975–994. https://doi.org/10.1093/ia/iiy148

Herrero-Diz, Paula; Pérez-Escolar, Marta; & Plaza Sánchez, Juan Francisco. (2020). Desinformación de género: análisis de los bulos de Maldito Feminismo. Revista ICONO 14. Revista Científica de Comunicación y Tecnologías Emergentes, 18(2), 188-216. https://doi.org/10.7195/ri14.v18i2.1509

Hjorth, Frederik; & Adler-Nissen, Rebecca. (2019). Ideological Asymmetry in the Reach of Pro-Russian Digital Disinformation to United States Audiences. Journal of Communication, 69(2), 168–192. https://doi.org/10.1093/joc/jqz006

Inkster, Nigel. (2016). Information Warfare and the US Presidential Election. Survival, 58(5), 23-32. https://doi.org/10.1080/00396338.2016.1231527

Innes, Martin; & Dawson, Andrew. (2022). Erving Goffman on Misinformation and Information Control: The Conduct of Contemporary Russian Information Operations. Symbolic Interaction. https://doi.org/10.1002/symb.603

Jankowicz, Nina. (2020). How to Lose the Information War: Russia, Fake News, and the Future of Conflict. Bloomsbury Publishing.

López-Olano, Carlos; & Fenoll, Vicente. (2019). Posverdad, o la narración del procés catalán desde el exterior: BBC, DW y RT. Profesional de la información, 28(3), e280318. https://doi.org/10.3145/epi.2019.may.18

López Torán, José Manuel. (2022). Y la guerra entró en los hogares: noventa años de propaganda y fotografía bélica (1855-1945). Historia & Guerra, 2, 17-43. https://doi.org/10.34096/hyg.n2.11061

Magdin, Radu. (2020). Disinformation Campaigns in the European Union: Lessons Learned from the 2019 European Elections and 2020 COVID-19 Infodemic in Romania. Romanian Journal of European Affairs, 20(2). https://cutt.ly/FX7q4lt

Morejón-Llamas, Noemí; Martín-Ramallal, Pablo; & Micaletto-Belda, Juan-Pablo. (2022). Twitter content curation as an antidote to hybrid war during Russia’s invasion of Ukraine. Profesional de la información, 31(3), e310308. https://doi.org/10.3145/epi.2022.may.08

Noain-Sánchez, A. (2021). Desinformación y Covid-19: Análisis cuantitativo a través de los bulos desmentidos en Latinoamérica y España. Estudios sobre el Mensaje Periodístico, 27(3), 879-892. https://doi.org/10.5209/esmp.72874

Paris, Britt; & Donovan, Joan. (2019, September 18). Deepfakes and cheapfakes: The manipulation of audio and visual evidence. Data & Society. https://cutt.ly/tX7wy1t

Rechtik, Marek; & Mares, Miroslav. (2021). Russian disinformation threat: comparative case study of Czech and Slovak approaches. Journal of Comparative Politics, 14(1), 4-19. https://cutt.ly/WX7waLr

Richards, Julian. (2021). Fake news, disinformation and the democratic state. Revista ICONO 14. Revista Científica de Comunicación y Tecnologías Emergentes, 19(1), 95-122. https://doi.org/10.7195/ri14.v19i1.1611

Robertson, Emily. (2014). Propaganda and ‘manufactured hatred’: A reappraisal of the ethics of First World War British and Australian atrocity propaganda. Public Relations Inquiry, 3(2), 245-266. https://doi.org/10.1177/2046147X14542958

Rodríguez-Pérez, Carlos. (2021). Desinformación online y fact-checking en entornos de polarización social: El periodismo de verificación de Colombiacheck, La Silla Vacía y AFP durante la huelga nacional del 21N en Colombia. Estudios sobre el Mensaje Periodístico, 27(2), 623-637. https://doi.org/10.5209/esmp.68433

Russian Analytical Digest. (2022, April 28). Russian information warfare. Center for Security Studies. https://cutt.ly/MX7whmR

Salaverría, Ramón; Buslón, Nataly; López-Pan, Fernando; León, Bienvenido; López-Goñi, Ignacio; & Erviti, María-Carmen. (2020). Desinformación en tiempos de pandemia: tipología de los bulos sobre la Covid-19. Profesional de la información, 29(3), e290315. https://doi.org/10.3145/epi.2020.may.15

Sánchez-Duarte, José Manuel; & Magallón Rosa, Raúl. (2020). Infodemia y COVID-19. Evolución y viralización de informaciones falsas en España. Revista Española de Comunicación en Salud, 31-41. https://doi.org/10.20318/recs.2020.5417

Pérez-Ruiz, Andrea; & Aguilar-Gutiérrez, Manuel. (2019). Propaganda, manipulación y uso emocional del lenguaje político. In R. Aparici & D. García-Marín (Eds.), La posverdad, una cartografía de los medios, las redes y la política (pp. 97-113). Gedisa.

Pizarroso Quintero, Alejandro. (2005). Nuevas guerras, vieja propaganda (de Vietnam a Irak). Cátedra.

Pizarroso Quintero, Alejandro. (2008). Prensa y propaganda bélica 1808-1814. Cuadernos dieciochistas, 8, 203-222. https://cutt.ly/10VLobz

Pupcenoks, Juris; & Seltzer, Eric James. (2021). Russian Strategic Narratives on R2P in the ‘Near Abroad’. Nationalities Papers, 49(4), 757-775. https://doi.org/10.1017/nps.2020.54

Thompson, Oliver. (1999). Easily Led. A History of Propaganda. Sutton Publishing.

Van der Vet, Feek. (2021). Spies, Lies, Trials, and Trolls: Political Lawyering against Disinformation and State Surveillance in Russia. Law & Social Inquiry, 46(2), 407-434. https://doi.org/10.1017/lsi.2020.36

Wardle, Claire. (2019). First Draft’s essential guide to understanding information disorder. First Draft News. https://cutt.ly/IX4jTXf

Ziegler, Charles E. (2018). International dimensions of electoral processes: Russia, the USA, and the 2016 elections. International Politics, 55, 557–574. https://doi.org/10.1057/s41311-017-0113-1

Author notes

* Assistant Professor at the Department of Journalism and Corporate Communication, Faculty of Communication Sciences

** Associate Professor PhD at the Department of Journalism and Corporate Communication, Faculty of Communication Sciences

Additional information

Translation to English: Brian O’Halloran

To cite this article: García-Marín, David; & Salvat-Martinrey, Guiomar. (2023). Disinformation and war. Verification of false images about the Russian-Ukrainian conflict. ICONO 14. Scientific Journal of Communication and Emerging Technologies, 21(1). https://doi.org/10.7195/ri14.v21i1.1943

ISSN: 1697-8293

Vol. 21

Num. 1

Year 2023
